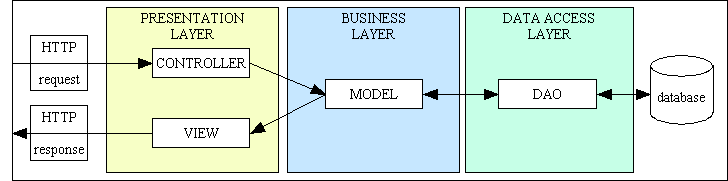

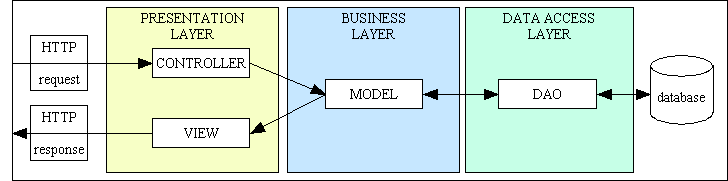

Figure 1 - The RADICORE architecture: MVC plus 3 Tier

This topic was covered first in A minimalist approach to Object Oriented Programming with PHP

In my experience those people who come out of university with a Computer Science degree rarely become top level programmers for the simple reason that they do not realise that programming is an art, not a science. Unlike science, in which you can be taught to follow certain scientific principles and produce the same results as everybody else, programming principles cannot be applied willy-nilly, they must be applied with skill. Programming is an art as it requires its practitioners to have a basic talent to begin with. Talent is not something which can be taught, it has to pre-exist within each individual. Just as you cannot grow a flower unless you start with a seed, you cannot make an artist out of someone who does not already have the seed of that art within them.

While a lot of "experts" attempt to reduce the art of programming to a number of principles, practices and patterns, none of these can be applied "as-is" as each implementation has to be the result of each individual developer's understanding of their problem domain coupled with their ability to devise the best solution to that problem using their current programming language. The solution will rarely rely on a single principle, it will instead involve a marriage of several which can be linked together in any number of ways. The solution will start with the choice of programming language, of which there are many, which identifies the list of functions which the developer can use as the basic building blocks, but it is which of those functions is chosen, and in what order they are linked together and how they are linked together that matters. It is not possible to implement a principle or pattern with a single function, it may take dozens or even hundreds of lines of code.

So what is the difference between science and art?

For example, take a battery, two wires and a container of water. Connect one end of each wire to different terminals on the battery and place the other end in the water. Bubbles of hydrogen gas will appear on the negative wire (cathode) while bubbles of oxygen will appear on the positive wire (anode). This scientific experiment can be duplicated by any child in any school laboratory with exactly the same result. Guaranteed. Every time. Believe it or not the reverse is also true. Take a container and fill it with two parts hydrogen and one part oxygen, then ignite it. The result will be a release of energy in the form of a powerful explosion and the residue will be nothing but a drop of water.

Now ask ten developers to implement the Model-View-Controller (MVC) design pattern and they will produce ten different implementations. While each of these implementations may be effective in that they achieve a workable result it would be wrong to say that they are equal for the reason that "it works" is not the sole factor when a programmer's efforts are judged by others. It is the quality of the implementation which will be measured, and quality, just like beauty, lies in the eye of the beholder. Some will be perceived as beautiful while others will be perceived as ugly. Each artist will have their own particular style, which makes it possible to identify other works by the same artist because of that distinctive style. While "style" and "beauty" are words which are not used when describing scientific principles, they are used when describing a work of art.

Art is all about talent. It cannot be taught - you either have it or you don't. You may have a little talent, or you may have lots of talent. The more talented the artist then the larger the audience who will likely appreciate his work, and the more value his work will be perceived to have. Those with little or no talent will produce work of little or no value. A true artist can take a lump of clay and mould it into something beautiful. A non-artist can take a lump of clay and mould it into - another lump of clay. A skilled sculptor can take a hammer and chisel to a piece of stone and produce a beautiful statue. An unskilled novice can take a hammer and chisel to a large piece of stone and produce a pile of little pieces of stone. A skilled painter can take a canvas, some coloured oils and a brush, and create a beautiful portrait. An unskilled painter can create a canvas daubed with coloured oils that viewers will hardly recognise. It is not possible to teach an art to someone who does not have the basic talent to begin with. You cannot give a book on how to play the piano to a non-talented individual and expect them to become a concert pianist. You cannot give a book on how to write novels to a non-talented individual and expect them to become a novelist. You cannot give a book on how to write programs to a non-talented individual and expect them to become an expert programmer.

Some great artists find it difficult to describe their skill in such a way as to allow someone else to produce comparative works. Someone once asked a famous sculptor how it was possible for him to carve such beautiful statues out of a piece of stone. He replied: The statue is already inside the stone. All I have to do is remove the pieces that don't belong.

The great sculptor may describe the tools that he uses and how he holds them to chip away at the stone, but how many of you could follow those "instructions" and produce a work of art instead of a pile of rubble?

In her article The Art of Programming Erika Heidi says the following:

I see programming as a form of art, but you know: not all artists are the same. As with painters, there are many programmers who only replicate things, never coming up with something original.

Genuine artists are different. They come up with new things, they set new standards for the future, they change the current environment for the better. They are not afraid of critique. The "replicators" will try to let them down, by saying "why creating something new if you can use X or Y"?

Because they are not satisfied with X or Y. Because they want to experiment and try by themselves as a learning tool. Because they want to create, they want to express themselves in code. Because they are just free to do it, even if it's not something big that will change the world.

That is why my personal motto is "Innovate, don't Imitate". I don't copy the work of others when I know that I can do better. Anybody can copy the work of another, but it takes skill and talent to create something new. One of my early critics said the following:

There is nothing innovative in your system except where you have committed some kind of random design error.

He obviously hadn't noticed the following:

Have you seen anything similar in other frameworks?

In his article The Developer/Non-Developer Impedance Mismatch Dustin Marx makes this observation:

Good software development managers recognize that software development can be a highly creative and challenging effort and requires skilled people who take pride in their work. Other not-so-good software managers consider software development to be a technician's job. To them, the software developer is not much more than a typist who speaks a programming language. To these managers, a good enough set of requirements and high-level design should and can be implemented by the lowest paid software developers.

In his article Mr. Teflon and the Failed Dream of Meritocracy the author Zed Shaw says this:

You can either write software or you can't. [....] Anyone can learn to code, but if you haven't learned to code then it's really not something you can fake. I can find you out by sitting you down and having your write some code while I watch. A faker wouldn't know how to use a text editor, run code, what to type, and other simple basic things. Whether you can do it well is a whole other difficult complex evaluation for an entirely different topic, but the difference between "can code" and "cannot" is easy to spot.

He is saying that being able to write code on its own does not necessarily turn someone into a programmer. It is the ability to do it well when compared with other programmers that really counts. Anybody can daub coloured oils onto a canvas, but that does not automatically qualify them to be called an artist. Anyone can assemble flat-packed furniture, but that does not turn them into a cabinet maker.

R. Buckminster Fuller wrote:

When I am working on a problem, I never think about beauty. I think only of how to solve the problem. But when I have finished, if the solution is not beautiful, I know it is wrong.

A well-written program is a thing of beauty, something that is easy to read and follow, especially by someone who was not the original author. A new reader should be able to quickly determine its structure and its logic, what it does and how it does it, and how control flows through the code. It should be obvious that only an artist can create a thing of beauty. In a procedural language spaghetti code is ugly code. The equivalent in an OO language is called lasagna code.

There are some disciplines which require a combination of art and science. Take the construction of bridges or buildings, for example: scientific principles are required to ensure the structure will be able to bear the necessary loads without falling down, but artistic talent is required to make it look good. An ugly structure will not win many admirers, and will not enhance the architect's reputation and win repeat business. Nor will a beautiful structure which collapses shortly after being built. A beautiful structure may be maintained for centuries, whereas an ugly one may be demolished within a few years.

Another difference between scientific and software principles is the way in which they are published.

To be accepted in the scientific community any new discovery must be fully documented and submitted to peer review before it is published in the appropriate scientific journal. This discourages charlatans from making false claims. Whenever a new procedure or technique is published the exact steps taken to produce the results are documented in full so that others can take the same steps and duplicate those results. That is science.

There is no equivalent in the programming community. There is no central publication which lists all the principles and practices, and there is no peer review before publication is allowed. A large number of patterns, principles or practices just provide a vague outline without providing any code which proves the efficacy of their claim. While some may include code samples, they are often not provided in the language that you currently use, and seasoned programmers will be aware that each different language has its own sets of advantages or restrictions.

For example, the Composite Reuse Principle (CRP), which is often stated as "favour composition over inheritance", originated in those early languages which regarded subclassing (implementation inheritance) and subtyping (interface inheritance) as separate and mutually exclusive concepts. This meant that only virtual (abstract) methods were subject to dynamic dispatch and could be overridden, while non-abstract methods were subject to static dispatch and could not be overridden. This led to the expression "Subclassing is for code sharing while subtyping is for polymorphism", which is discussed in more detail in Subclasses vs Subtypes. The notion that composition is better than inheritance depends entirely on the language that is being used. PHP allows every method, whether abstract or non-abstract, to be subject to dynamic dispatch, which makes this principle totally invalid. I have provided proof of this in Composition is a Procedural Technique for Code Reuse.
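The dispatch behaviour described above can be illustrated with a minimal sketch. It is written in Python rather than PHP, on the assumption that Python resembles PHP in resolving every method call against the actual object at run time. The class and method names (`Table`, `Customer`, `describe`, `report`) are hypothetical and exist only for illustration.

```python
class Table:
    def describe(self):
        # A concrete (non-abstract) method with a working default.
        return "generic table"

    def report(self):
        # The call to describe() is resolved at run time against the
        # class of the actual object, not against Table itself.
        return f"This is a {self.describe()}"


class Customer(Table):
    def describe(self):
        # Overrides a non-abstract method inherited from Table.
        return "customer table"


generic_report = Table().report()
customer_report = Customer().report()
```

The inherited `report()` method is shared code, yet it produces a different result for the subclass because the overridden `describe()` is the one that gets called.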

The ability to define or redefine best practices is not restricted to programmers who have mastered their craft. Anybody (and his dog) can publish whatever they like on the internet. As well as trying to create new practices they often spend a lot of time trying to promote their own poor interpretation of existing practices. This usually fails for one of two reasons:

Writing a program is like writing a book - it is a work of authorship. Writing a book that people will pay to read is not a simple matter of throwing thousands of words together which obey the rules of grammar. It must provide something of value to the reader, be it either education or entertainment. Writing a program is about taking an instruction manual that a human being can understand and translating it into an instruction manual that a computer can understand. The output of this exercise then depends on the quality of the input, or as we say in the computer world "Garbage In, Garbage Out". This "translation" means taking high-level instructions written in a natural language and converting them into low-level instructions in a computer language. If you take the instructions given to an office worker on how to produce an invoice from a sales order and then compare them with the equivalent instructions given to a computer you will see a huge difference. A computer may be significantly faster than a human, but it is still an idiot in that it can only do what it is programmed to do. It follows its instructions to the letter, and if there is anything wrong, missing or vague in those instructions then the results will probably be unexpected. While a human being will probably stop after realising that a mistake has been made, a computer will carry on repeating that mistake thousands of times a second until it is told to stop. This has led to the saying "To err is human, but to really screw things up you need a computer".

Computer programming requires talent - the more talent the better the programmer. It does not involve the simple conversion of the syntax of one language into the syntax of another. Different authors can use different words to convey the same meaning, just as different programmers can use different instructions to achieve the same results. A computer can only understand binary where each instruction is a collection of bits which are either ON or OFF, either 0 (zero) or 1 (one). Nobody writes binary code any more, they use an intermediate programming language which converts human-readable instructions into computer-readable instructions. The number of programming languages is immense, with their syntax being as different as Greek is to Chinese or to Egyptian hieroglyphics. There are different languages for different purposes, such as COBOL (COmmon Business-Oriented Language) for writing business applications, and FORTRAN (FORmula TRANslation) for mathematicians. While most of these languages provide features which aid in the production of software which is efficient and secure they are still unable to prevent bad programmers from writing bad code. This leads to the observation that You Can Write FORTRAN in any Language.

A successful programmer does not have to be a member of Mensa or even have a Computer Science degree, but neither should he be a candidate for the laughing academy or funny farm. A successful programmer will be a genuine artist and not a Piss Artist, one who does not do things correctly. A programmer must have a logical mind and must be able to think like a computer. Most large problems can be broken down into a series of simple steps, so if you concentrate on solving each of those steps one at a time you will soon complete your journey by taking the last step, by solving the last problem. Although the programmer has to work within the limitations of the underlying hardware and the associated software (operating system, programming language, tool sets, database, et cetera), the biggest limitation by far is his/her own intellect, talent and skill.

Although there are experienced developers who can describe certain "principles" or techniques which they follow in order to achieve certain results, these principles cannot be implemented effectively by unskilled workers in a robotic fashion. A good programmer has an open mind and has a flexible approach that allows him to adapt to changing circumstances. A bad programmer has a closed mind and an inflexible approach, often exhibiting such traits as Dogmatism and Pedantry in their rigid interpretation of what are supposed to be "best practices". These people typically do not understand what "when appropriate" means, so they rely on the default behaviour of applying a rule indiscriminately instead of intelligently. Knowing if and when to apply a particular principle would be a good start, closely followed by knowing when to stop and thus avoiding the law of diminishing returns. Although a principle may be followed in spirit, the effectiveness of the actual implementation is down to the skill of the individual developer.

Simply following a set of rules in a dogmatic and pedantic fashion without understanding the circumstances under which they were created and the circumstances under which they should be applied will not turn a novice into an expert. Copying what experts do without understanding what exactly it is they are doing and why they are doing it can lead to a condition known as Cargo Cult Programming or Cargo Cult Software Engineering. Just because you mimic the same procedures, processes and design patterns that the experts use does not guarantee that your results will be just as good as theirs. An intelligent programmer will not accept a rule at face value, he will examine the logic behind that rule, and if he determines that the logic is faulty or outdated then he may feel justified in ignoring that rule in favour of a better one. He will be (or should be) vindicated if he produces superior results. In OOP this could mean producing results with less code in shorter timescales and at a reduced cost. This is usually the product of having greater volumes of reusable code. Any practice which results in less reusable code should be re-evaluated and, if found wanting, should be discarded.

Some principles are so badly written that they do nothing but promote confusion instead of clarity. A prime example of this is Robert C. Martin's Single Responsibility Principle (SRP) which had to be defined three times. In the first iteration it was described as:

A class should only have one reason to change.

In the second iteration it was described as:

This principle is about people.

but later in the same article he revised it by saying:

This is the reason we do not put SQL in JSPs. This is the reason we do not generate HTML in the modules that compute results. This is the reason that business rules should not know the database schema. This is the reason we separate concerns.

In a third iteration he redefined it to say:

GUIs change at a very different rate, and for very different reasons, than business rules. Database schemas change for very different reasons, and at very different rates than business rules. Keeping these concerns separate is good design.

As soon as I read the last two redefinitions I realised that what he was describing had all been covered before in the 3-Tier Architecture which I had encountered while using my previous language, and had already implemented in the PHP version of my framework, so I decided to ignore what he wrote as it did not add anything of value. This did not prevent legions of novice programmers from continuing to follow a complete misinterpretation. Whilst it was obvious that the Single Responsibility Principle was aimed at modules which had more than one responsibility, they interpreted "more than one" as "does too much" which they could then measure by a module's size instead of its content. This is why some numpties say that "a class should not have more than N methods, and each method should have no more than N lines of code". By splitting up a large class which already exhibits high cohesion (on which Uncle Bob himself stated that SRP was based) into a collection of smaller fragments these numpties fail to realise that they have swapped one failure for two others as they are now violating both encapsulation (the act of placing data and ALL the operations that perform on that data in the SAME class) and cohesion (the functional relatedness of the contents of a module). Not to mention the fact that this fragmentation makes the code more difficult to read and therefore more difficult to maintain.

Note that the last two quotations from Uncle Bob show that while he was writing about the separation of responsibilities he also referred to it as the separation of concerns. This proves that SoC and SRP mean exactly the same thing, as does the 3-Tier Architecture.

Another source of confusion with badly-written programming principles is when someone takes a word that already has a well-understood meaning in one context and then gives it a different meaning in a totally unrelated context. For example, the term coupling describes how modules interact. When you have two modules where one module contains a call to the other then those two modules are coupled. The degree of coupling can either be regarded as loose or tight, with loose coupling being regarded as better than tight coupling as it provides for more reusability. In this context the term "decoupling", which is the same as uncoupling, should mean removing the call from one module to the other, which means that you end up with two modules which are no longer connected. This hasn't stopped some poorly educated individuals from using the term decoupling to mean the same thing as SRP or SoC, as in:

Code decoupling refers to breaking up the software into smaller components and reducing the interdependence between these components so that they can be tested and maintained independently.

How could any sensible person make such a glaring mistake? Giving two unrelated meanings to the same term causes nothing but confusion as when you encounter that term in a sentence you may accidentally assign the wrong meaning to it and take your mind down the wrong path.

If people redefining established principles with a different title and different words, and sometimes projecting a different meaning is not bad enough, there are some clueless newbies out there who add to the cesspit of knowledge by inventing totally bogus rules, such as:

One problem with programming principles is that the world of software covers many domains, such as operating systems, compilers, word processors, process control systems, gaming software and business systems, et cetera, which are totally different, which means that the practices employed in one domain may be totally inappropriate in another. I write nothing but administrative applications for businesses which are characterised by having HTML forms at the front end, an SQL database at the back end, and software in the middle to handle the movement of data between the two ends as well as the business rules. My primary languages in the first 20 years of my career were compiled, procedural, strictly typed and used a bitmapped Graphical User Interface (GUI), but my language of choice for the past 20 years has been interpreted, object oriented, dynamically typed, and uses an HTML interface. It has been my experience that practices devised for one type of language or problem domain do not work very well in another type. The reason why there are so many different languages is because each one is devised to make it easier to write software in a particular problem domain, and some language authors seem to think that they can make a better job of it than all those who came before. For example, PHP was created to make it easier to create dynamic web pages, and in that it has succeeded. It still hasn't stopped others from saying "I can do better than that!"

If you mention the word "patterns" to most programmers they immediately think of those Design Patterns written by the Gang of Four or Patterns Of Enterprise Application Architecture (PoEAA) written by Martin Fowler. They read about these patterns and try to implement as many as possible in their code. This is wrong. Even Erich Gamma, one of the co-authors of the GoF book says so. In How to use Design Patterns he wrote:

What you should not do is have a class and just enumerate the 23 patterns. This approach just doesn't bring anything. You have to feel the pain of a design which has some problem. I guess you only appreciate a pattern once you have felt this design pain.

Do not start immediately throwing patterns into a design, but use them as you go and understand more of the problem. Because of this I really like to use patterns after the fact, refactoring to patterns.

One comment I saw in a news group just after patterns started to become more popular was someone claiming that in a particular program they tried to use all 23 GoF patterns. They said they had failed, because they were only able to use 20. They hoped the client would call them again to come back again so maybe they could squeeze in the other 3.

Trying to use all the patterns is a bad thing, because you will end up with synthetic designs - speculative designs that have flexibility that no one needs. These days software is too complex. We can't afford to speculate what else it should do. We need to really focus on what it needs. That's why I like refactoring to patterns. People should learn that when they have a particular kind of problem or code smell, as people call it these days, they can go to their patterns toolbox to find a solution.

In the blog post When are design patterns the problem instead of the solution? T.E.D. wrote:

My problem with patterns is that there seems to be a central lie at the core of the concept: The idea that if you can somehow categorize the code experts write, then anyone can write expert code by just recognizing and mechanically applying the categories. That sounds great to managers, as expert software designers are relatively rare. The problem is that it isn't true.

The truth is that you can't write expert-quality code with "design patterns" any more than you can design your own professional fashion designer-quality clothing using only sewing patterns.

It is not just the mechanical use of design patterns which shows that the developer does not have the ability to determine whether their use is appropriate or actually beneficial, it is also the use of those programming principles, such as SOLID and GRASP, which are often acclaimed as being "best practices". But are they really the best? When I delved into the world of OOP I did not know that these principles existed, so I didn't follow them. I just used my instinct and my intuition to find ways to use Encapsulation, Inheritance and Polymorphism to increase code reuse and decrease code maintenance. When I was later informed that my code was considered to be complete rubbish as I had not followed these practices I examined them to find out what the fuss was all about. Upon close examination I discovered that some of these practices were totally inappropriate as they had been written for languages which were compiled, statically typed and used a bitmapped graphical display whereas the language I was using was interpreted, dynamically typed and used text-based HTML forms. As I had already written code which produced high levels of reusability I decided to ignore any principle or practice which, in my personal opinion, would decrease those levels of reusability. For a list of "best practices" which I refuse to follow, along with my alternatives, please read PHP Best Practices for Database Applications.

Instead of starting with a description of a pattern or practice and then trying to write the code to implement it you should avoid the premature use of design patterns. You should follow Erich Gamma's advice, as recorded in How to use Design Patterns:

Do not start immediately throwing patterns into a design, but use them as you go and understand more of the problem. Because of this I really like to use patterns after the fact, refactoring to patterns.

This sentiment is echoed in the article Design Patterns: Mogwai or Gremlins? by Dustin Marx:

The best use of design patterns occurs when a developer applies them naturally based on experience when need is observed rather than forcing their use.

But how exactly do you refactor to patterns? The best advice I have ever seen was contained in Designing Reusable Classes which was published in 1988 by Ralph E. Johnson & Brian Foote. I should point out that even though this paper was written more than a decade BEFORE I started work on my PHP framework I did not encounter it until more than a decade AFTER I had written it. Why was this you may ask? Simply because I did not know it existed due to the lack of back links on the internet which was my primary source of information. Despite my lack of OO training my instinct and intuition took me down the correct path.

Instead of trying to implement patterns which other people of dubious ability claim to have recognised, what Johnson and Foote advocate is that you start by writing your own code and get it to work before you start refactoring it. By "refactor" I do not mean that you look for places where you can inject somebody else's pattern or principle, you look for places in the code which you have already written where patterns of similar behaviour or structure may already exist. As Johnson and Foote wrote in their paper:

Useful abstractions are usually designed from the bottom up, i.e. they are discovered, not invented. We create new general components by solving specific problems, and then recognizing that our solutions have potentially broader applicability.

Finding new abstractions is difficult. In general, it seems that an abstraction is usually discovered by generalizing from a number of concrete examples. An experienced designer can sometimes invent an abstract class from scratch, but only after having implemented concrete versions for several other projects.

This has the ring of truth about it as I already had 20 years' worth of experience of writing database applications using several non-OO languages which all had a consistent set of similarities:

To my mind the word "pattern" refers to areas of code which contain similar logic or structure, where that logic or structure appears to be duplicated. Having identified a block of code which looks similar to another block of code you then employ a technique which Johnson and Foote call programming-by-difference where you extract the similar from the different, the abstract from the concrete, so that you can place the similar into a reusable module and the different into a unique module. Of particular importance is when you find that several objects share the same protocols (methods or operations) as this signifies that these objects are interchangeable and "plug compatible". To avoid duplicating these protocols in multiple objects they advise putting them into an abstract class which can then be shared by multiple concrete classes using inheritance. Thus you can think of an abstract class as being like a skeleton or template for a certain type of object. It provides a common structure and you just use a subclass to fill in the blanks.
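The idea of extracting the similar from the different can be sketched as follows. This is a minimal illustration in Python rather than PHP, and the `Exporter` classes are hypothetical examples that do not come from the framework. It assumes two export routines which were found to share the same loop and to differ only in how a single row is formatted.

```python
from abc import ABC, abstractmethod


class Exporter(ABC):
    """The similar part: the skeleton shared by every export format."""

    def export(self, rows):
        # Identical for every format: format each row, join with newlines.
        return "\n".join(self.format_row(row) for row in rows)

    @abstractmethod
    def format_row(self, row):
        """The different part: each subclass fills in this blank."""


class CsvExporter(Exporter):
    def format_row(self, row):
        return ",".join(str(value) for value in row)


class TsvExporter(Exporter):
    def format_row(self, row):
        return "\t".join(str(value) for value in row)


rows = [(1, "apple"), (2, "pear")]
csv_text = CsvExporter().export(rows)
tsv_text = TsvExporter().export(rows)
```

Both subclasses share the same protocol (`export`), so any code which calls `export()` can work with either of them without modification.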

Anybody with experience with database applications should immediately recognise the fact that every table in the database is subject to exactly the same set of CRUD operations. That identifies the similarities that can be placed in an abstract superclass, so all that remains is the ability to identify the differences and then isolate them in a series of concrete subclasses. The rules for creating SQL queries are the same regardless of what data is being handled, so a competent programmer should be able to create a standard routine to deal with those similarities. Likewise the rules for creating HTML documents are the same regardless of what data is being handled, so a competent programmer should be able to use a templating system to deal with those similarities. It would appear that the ability to spot similarities and patterns, and then to reduce them to reusable modules, is a skill which is sadly lacking in most of today's coders. The only patterns which they seem to be capable of recognising are those which have been publicised by someone else.
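As a rough illustration of a standard routine for building SQL queries, the sketch below uses Python and its built-in `sqlite3` module rather than PHP. The function names `build_insert` and `build_select` and the two sample tables are assumptions made for this example only. The point is that the same two functions serve any table because only the table name and the column names vary.

```python
import sqlite3


def build_insert(table_name, row):
    """Build a parameterised INSERT for any table from a column->value dict."""
    columns = list(row)
    column_list = ", ".join(columns)
    placeholders = ", ".join("?" for _ in columns)
    sql = f"INSERT INTO {table_name} ({column_list}) VALUES ({placeholders})"
    return sql, [row[column] for column in columns]


def build_select(table_name, where):
    """Build a parameterised SELECT for any table from a column->value dict."""
    conditions = " AND ".join(f"{column} = ?" for column in where)
    sql = f"SELECT * FROM {table_name} WHERE {conditions}"
    return sql, list(where.values())


# Table and column names are interpolated into the SQL text, so they are
# assumed to come from trusted metadata and never from user input.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE product (code TEXT PRIMARY KEY, price REAL)")

for table, row in [
    ("customer", {"id": 1, "name": "Alice"}),
    ("product", {"code": "P1", "price": 9.5}),
]:
    sql, params = build_insert(table, row)
    conn.execute(sql, params)

sql, params = build_select("product", {"code": "P1"})
found = conn.execute(sql, params).fetchall()
```

Nothing in either function mentions a particular table, which is what allows such a routine to be written once and shared by every table class.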

When learning how to use PHP's object oriented capabilities the first question I asked myself was "What is the difference between Procedural and Object Oriented Programming?" The basic answer is that when you create a procedural function you can only ever have one function with that name, but if you put that function inside an OO class it becomes a method which, although it must be unique within a class, can be duplicated in any number of other classes. The act of placing data and the operations which can be performed on that data in a class is known as encapsulation. If several classes share the same methods (operations) then those methods can be moved to a superclass which can then be shared with multiple subclasses using inheritance. When you have an application which contains hundreds of concrete table classes, being able to inherit large numbers of identical methods and volumes of boilerplate code from a single abstract class eliminates a huge amount of code that would otherwise have to be duplicated. This also means that a module which calls those methods on one object can be made to call the same methods on any number of different objects, thus providing polymorphism which results in a different implementation for each object.

Following this logic when I began experimenting with PHP I saw no reason why I should not create a separate concrete class for each table and inherit all the standard code from an abstract superclass which provided a set of common table methods. Note that I initially called this a "generic" class as PHP4 did not support the abstract keyword. This generic class also contained a set of empty common table properties to provide the physical characteristics of a particular table. These details are filled in by standard code in the constructor of each table subclass using data which is extracted from the database's own Information Schema.
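A minimal sketch of this arrangement might look like the following. All of the names used here (`GenericTable`, `Customer`, `$fieldSpec`, `buildInsert()`) are hypothetical and are not taken from the RADICORE source. The column details are hard-coded in the constructor purely for illustration instead of being extracted from the Information Schema.

```php
<?php
// Illustrative sketch only: class, property and method names are assumptions.
abstract class GenericTable
{
    // Empty common properties; each subclass fills in the blanks.
    protected string $tableName  = '';
    protected array  $fieldSpec  = [];  // column name => specification
    protected array  $primaryKey = [];

    // The rules for building an INSERT are the same for every table,
    // so the logic lives here once and is inherited by every subclass.
    public function buildInsert(array $row): string
    {
        // Discard any input that is not a column on this table.
        $row     = array_intersect_key($row, $this->fieldSpec);
        $columns = array_keys($row);
        $params  = array_map(fn(string $c): string => ':' . $c, $columns);

        return sprintf(
            'INSERT INTO %s (%s) VALUES (%s)',
            $this->tableName,
            implode(', ', $columns),
            implode(', ', $params)
        );
    }
}

// A concrete table class supplies only what is different: its own structure.
class Customer extends GenericTable
{
    public function __construct()
    {
        // Hard-coded here; an assumption made to keep the sketch self-contained.
        $this->tableName  = 'customer';
        $this->fieldSpec  = [
            'customer_id' => ['type' => 'integer', 'required' => true],
            'name'        => ['type' => 'string',  'required' => true],
        ];
        $this->primaryKey = ['customer_id'];
    }
}

$customer = new Customer();
echo $customer->buildInsert(['customer_id' => 1, 'name' => 'Smith', 'junk' => 'x']), PHP_EOL;
```

Adding another table in this sketch means adding another small subclass; nothing in `GenericTable` has to change.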

Having provided polymorphism how do you take advantage of it? The best way of providing a mechanism for a module to switch to an alternative object before calling those methods is known as Dependency Injection. This meant that when I created a component, called a Controller, to call the insertRecord() method on a table class I created a separate component called a component script to provide the identity of the table class. If I have hundreds of tables in my database I can have a separate copy of this component script to provide the identity of a different table yet they will all share the same controller script. Using this approach I was able to avoid having a separate dedicated Controller for each of my 400 database tables and instead have a library of 45 reusable Controllers, one for each Transaction Pattern.
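The relationship between a shared Controller and its many component scripts can be sketched roughly as follows. The names (`TableBase`, `Product`, `Supplier`, `addController()`) are hypothetical, and the controller is reduced to a single function for brevity; this is an illustration of the idea, not the framework's actual code.

```php
<?php
// Illustrative sketch only: all names are assumptions, not RADICORE's own.
abstract class TableBase
{
    // Inherited by every table class, so every table class is
    // interchangeable as far as the controller is concerned.
    public function insertRecord(array $data): array
    {
        // Standard validation and SQL generation would go here.
        $data['inserted_into'] = static::class;
        return $data;
    }
}

class Product  extends TableBase {}
class Supplier extends TableBase {}

// Reusable controller: it never hard-codes a table class name.
function addController(string $tableClass, array $input): array
{
    $object = new $tableClass();          // identity injected by the caller
    return $object->insertRecord($input); // same call, whatever the object
}

// Each "component script" does nothing more than name its table class
// and hand control to the shared controller.
$productResult  = addController(Product::class, ['name' => 'Widget']);
$supplierResult = addController(Supplier::class, ['name' => 'Acme']);
```

The controller logic is written once, and supporting a new table requires only a new table class plus a short script that names it.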

Note that this use of inheritance totally contradicts the Composite Reuse Principle (which I mentioned previously) which says that you should favour composition over inheritance. When I read about this principle I was astounded that they were claiming that inheritance was bad without ever identifying exactly how it was bad. It took me years to discover that the reason was that if you extend a concrete class then you automatically inherit all of its methods, and some may contain implementations that you do not want. The problem is actually identified in that statement, which means that the solution should be plain to see - never inherit from a concrete class, only ever inherit from an abstract class. While this solution was blindingly obvious to me it would seem that it was beyond the intellectual capabilities of so many of my fellow programmers.

My use of an abstract class came in useful a short time later when I realised that sometimes I needed to supplement the generic code within each concrete subclass with some additional code to deal with the unique business rules. My previous language, which was UNIFACE, dealt with this by providing a series of triggers (events) which were automatically fired at pre-determined points in the processing flow. All you had to do was insert code into one of these empty triggers and it would be included in that processing flow. I duplicated this behaviour in my own PHP code by creating a series of empty methods in the abstract superclass which were called in pre-determined places. As they were always inherited they could be overridden with different implementations within each concrete subclass. I later discovered that I had implemented a version of the Template Method Pattern and that my empty methods were known as "hook" methods.
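The mechanism of empty "hook" methods called from a fixed processing flow can be illustrated with a short sketch. The method names (`preInsert()`, `postInsert()`) and the in-memory `$rows` array that stands in for the database are assumptions made for this example only.

```php
<?php
// Illustrative sketch only: method names are assumptions, not RADICORE's own.
abstract class HookedTable
{
    protected array $rows = [];   // stands in for the database

    // Template method: the processing flow is fixed here, once.
    final public function insertRecord(array $row): array
    {
        $row = $this->preInsert($row);    // hook: does nothing unless overridden
        $this->rows[] = $row;             // standard processing
        return $this->postInsert($row);   // hook: does nothing unless overridden
    }

    // Empty "hook" methods, called at pre-determined points in the flow.
    protected function preInsert(array $row): array  { return $row; }
    protected function postInsert(array $row): array { return $row; }
}

class Order extends HookedTable
{
    // Unique business rule: a new order always starts with a status.
    protected function preInsert(array $row): array
    {
        $row['status'] = $row['status'] ?? 'NEW';
        return $row;
    }
}

$saved = (new Order())->insertRecord(['order_id' => 42]);
```

A subclass which overrides no hooks inherits the standard flow unchanged, while `Order` alters the behaviour without touching, or even seeing, the code in `insertRecord()`.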

While a novice programmer can only pick patterns which have been identified by others and attempt to implement them, a more experienced programmer should be able to detect patterns within the code that he has written, possibly patterns which are invisible to others. My use of an abstract table class provides all the standard code in every concrete table class. This opened the door to vast amounts of polymorphism which I could exploit using my own version of dependency injection. This allowed me to create a library of just 45 page controllers which could be used to access any number of table classes in order to produce working transactions. This enabled me to build a library of Transaction Patterns which can be used as the basis for every transaction in every application subsystem. Unlike Design Patterns which offer nothing but a description of a pattern which each developer then has to implement themselves, with Transaction Patterns you can create a working implementation at the touch of a few buttons simply by linking a pattern with a database table. While the initial transaction includes basic data validation, the more complex business rules can be added to any table subclass using any of the "hook" methods which have been defined in the abstract class.

I have noticed that most programmers would not be able to recognise a pattern even if it crawled up their leg and bit them in the a**e. They require someone else to stick a flag in it which announces "THIS IS A PATTERN!". Yet there are some programmers whose pattern recognition abilities seem to be totally absent as if they had undergone some form of lobotomy. When I first published the existence of my Transaction Patterns in 2003 one person told me that they could not possibly exist, because if they did then somebody famous would have already written about them. The fact that I had identified them, documented them, and provided the code to implement them just did not register in his tiny brain. I pity anyone who has a person like him on their team.

Science allows anybody to follow a particular set of rules and to achieve the same results as the person who wrote those rules. Art is completely different. It is not about the rules that you follow, it is about how you follow the rules and the results that you are able to achieve. Being able to produce a work of art that other people will appreciate requires an artistic talent to begin with. In order to be regarded as a "good" OO programmer you have to first understand the aims of OOP, why it was invented in the first place and why it is supposed to be better than all the other paradigms. While a lot of confused individuals will come up with some misleading descriptions, the truth is quite simple:

Object Oriented Programming is programming which is oriented around objects, thus taking advantage of Encapsulation, Inheritance and Polymorphism to increase code reuse and decrease code maintenance.

The ability to create more reusable code is the key. This is not just my opinion. If you read Designing Reusable Classes which was published in 1988 by Ralph E. Johnson & Brian Foote you will see the following opening statement:

Since a major motivation for object-oriented programming is software reuse, this paper describes how classes are developed so that they will be reusable.

Why is reusable code so important? Because the more reusable code you have at your disposal the less code you have to write to get the job done, which means that you can get the job done in less time and at a lower cost. This equates to higher productivity. Creating reusable code requires that you follow the Don't Repeat Yourself (DRY) principle and replace multiple copies of the same code with a single copy that can be accessed multiple times. While the ability to spot blocks of code which are exact duplicates is very easy (there are a variety of static analysis tools which do just that) the ability to spot blocks of code which are not identical yet have significant numbers of similarities is not so easy.

As I have already mentioned above in the correct use of patterns and principles, this requires the ability to separate the similar from the different, the abstract from the concrete, using a technique described by Johnson & Foote as programming-by-difference which results in the similar being moved to reusable modules (such as an abstract superclass) and the different being moved to unique modules (such as concrete subclasses). While this technique can be described in simple terms its implementation turns out to be not so simple. In their paper Johnson and Foote said the following:

Even our researchers who use Smalltalk every day do not often come up with generally useful abstractions from the code they use to solve problems. Useful abstractions are usually created by programmers with an obsession for simplicity, who are willing to rewrite code several times to produce easy-to-understand and easy-to-specialize classes.

...

Decomposing problems and procedures is recognized as a difficult problem, and elaborate methodologies have been developed to help programmers in this process. Programmers who can go a step further and make their procedural solutions to a particular problem into a generic library are rare and valuable. [O' Shea et. al. 1986]

This is why, when I spotted that I had two table classes each of which had identical methods to implement the ubiquitous CRUD operations, I moved those methods to an abstract superclass so that I could share them with multiple concrete subclasses using inheritance. Note that what I did NOT do, unlike so many of my poorly educated brethren, was to create separate methods to load(), validate() and store() as I learned in my COBOL days that if you always have to execute a group of functions in a particular sequence then it would be more efficient to place that sequence in its own high-level function, thus replacing multiple calls with a single call. Over time I have added more methods to my abstract class, specifically to add "hook" methods which are an essential part of the Template Method Pattern. This has become the very heart of the RADICORE framework as it provides huge amounts of polymorphism which I can then take advantage of in my collection of reusable Controllers and Views using dependency injection.

An alternative to creating abstract classes which can be shared using inheritance is to create objects which can be called to perform the same logic but on different sets of data, such as using templates to create HTML documents. In the 20 years I had spent on the development of business applications prior to switching to PHP I had worked on enough programs to notice recurring patterns. Each screen, plus the logic for handling that screen, could be broken down into three areas - structure, behaviour and content where the structure and behaviour share similarities, which means that they can potentially be supplied from reusable modules, with only the content being different. Because of some previous exposure to XML and XSL I was able to create a series of reusable XSL stylesheets and then build a standard component which fills the selected stylesheet with data.

If turning useful abstractions into a generic library is considered to be difficult, then the next step, which is creating a reusable design in the form of a framework, is considered to be even more difficult. Johnson and Foote said the following:

One of the most important kinds of reuse is reuse of designs. A collection of abstract classes can be used to express an abstract design. The design of a program is usually described in terms of the program's components and the way they interact.

An object-oriented abstract design, also called a framework, consists of an abstract class for each major component. [see note #1 below]

Since frameworks provide for reuse at the largest granularity, it is no surprise that a good framework is more difficult to design than a good abstract class.

I created my first reusable library in COBOL in the early 1980s. I upgraded this into a framework in the mid 1980s when I created a Role Based Access Control (RBAC) system which allowed a user to only see and execute those programs to which access had been granted. In 2002 I began creating a PHP version of this framework which now contains the standard components shown in Figure 1 below:

Figure 1 - The RADICORE architecture: MVC plus 3 Tier

Each of the boxes in the above diagram is a hyperlink which will take you to a full description. A more detailed picture is also available.

Notes from the RADICORE implementation:

How much code in an application can be regarded as a candidate for reusability? It has been recognised by many people that the only code which is perceived by the customer to have any value is that which executes the business logic which is unique to each entity. Everything else is nothing more than boilerplate which takes you to the place where the business logic is executed. While the function of this boilerplate code is the same within different user transactions it is only the data being moved around which is different. This means that the ultimate aim of an OO programmer, something which separates the men from the boys, the ninjas from the numpties, would be to eliminate the need to write as much boilerplate as possible. So how much can "as much as possible" be? Would it be 10%, or 20%, or even 50%? How about 100%?

Believe it or not the RADICORE framework provides 100 PERCENT of the boilerplate code. The class file for each database table is generated from a function within the Data Dictionary. Individual user transactions are also generated from a different function by linking a database table with a Transaction Pattern and pressing a button. This creates a basic but working transaction, which can be immediately activated from the menu system, without the need to write any code - no PHP, no HTML, and no SQL. The only code the developers have to write themselves is the unique business logic which they insert into the relevant "hook" methods which can be overridden in any of the table subclasses.

So, according to Johnson and Foote a programmer who can create a good abstract class is rare and valuable and one who can create a good framework is even more rare and valuable. As I have managed to create both shall I put this on my CV? You should note that I did not acquire these skills by learning from "experts" as I was self taught.

In spite of the fact that I had apparently achieved what I was supposed to achieve with my implementation of OOP, it did not take long for the paradigm police, those paragons of programming purity, to point out that everything I did was wrong. If you read what they wrote you should see that some of their rules are totally fictitious, some are poor interpretations of sensible rules, while others are sensible rules being used in inappropriate circumstances. This is what led me to state the following as far back as 2014:

Some people know only what they have been taught while others know what they have learned.

I was not taught to follow those rules which is why I ignore them. By experimenting with various options I settled on those which produced the best results with the minimum amount of code and the maximum amount of reusability. My results have turned out to be better than their "best". When I was told "You should be following the same rules as the rest of us!" the wording of my response may have varied over the years, but the sentiment has remained the same:

If I were to do everything the same as you then I would be no better than you, and I'm afraid that your best is simply not good enough. In order to do better I have to start by being different, yet all you and all your cronies can do is say "Your methods are different, so they must be wrong".

That may be what you laughingly call "best practices" in your neck of the woods, but from where I'm standing your neck of the woods is simply the place where the bears go to take a dump. That is why you are ankle-deep in bear crap and I'm knee-deep in roses.

You tell me that if I followed the advice of these experts I would be standing on the shoulders of giants, but as their advice is not worth the toilet paper on which it is written I would instead regard it as paddling in the poo of pygmies.

Here endeth the lesson. Don't applaud, just throw money.

The following articles were all written by me: